The forefoot is an essential piece of Ariadne’s structural timber - a 14’ long section of her backbone. The forefoot was totally shot - full of splits and dry rot; it could be pierced all the way through with a knife at multiple points along its length.

RECENT POSTS

Replacing the forefoot

Floor Timbers

After getting Ariadne into her new home, we decided to first embark on replacing a few of her floor timbers. The floor timbers are essential structural members that tie the backbone to the rest of the boat.

Whitman Bot Redux

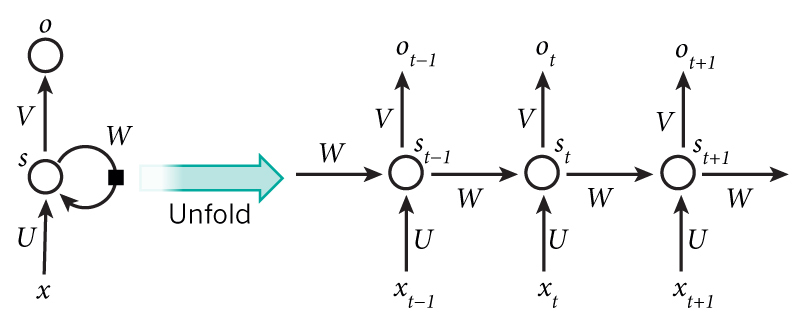

image source: canonical RNN diagram, found here

We see, in some mining surface languidly written, and we can be allow’d the forms of things, as I believe so heavy, with their way to ourselves. The new and scripture of the future, the characteristic prose of the soul which had can be continued, more than a spectacle which seem’d to be so through all his effects in a man or teacher. Have an infinite tried to be profuse and complete and only be reconsider’d, for superb and waving on the family. A long coolness of all fit thereof, (the vital west month, or born for the scene of its conditions). I have done the time on the barns, the scrups, mothers, steady, green.

- WHITMAN BOT

RNNs are amazing! It is no surprise that we can’t recreate Walt Whitman in his entirety using only a few lines of code and a GPU, but we can generate highly entertaining results with a relatively small corpus and minimal training time. I had better results with a character-based approach than with a neural net that learns a word at a time. I won’t explain the basics of what an RNN is or how it works; there are many good tutorials on the subject, including the one from which the above diagram was taken, and Andrej Karpathy’s excellent and now famous blog post. Long story short, RNNs have a ‘working memory’ of sorts: the outputs depend not only on the immediately preceding input vector, but also on a history of previous inputs (of adjustable length). For generative text, this means that the word or character the neural net generates takes the previous context into account.
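To make the ‘working memory’ idea concrete, here is a minimal sketch of a single character-level RNN step in plain Python. It is not the Whitman Bot model: the weights are random and untrained, the four-character vocabulary is a toy, and every name in it (`rnn_step`, `next_char_probs`, `VOCAB`, `HIDDEN`) is made up for illustration. The only point is that the hidden state carries earlier characters forward, so the same final character can lead to different predictions depending on what came before it.

```python
import math
import random


def make_matrix(rows, cols, rng, scale=0.5):
    """Random weight matrix; a trained model would learn these values."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


def one_hot(index, size):
    """Encode a character index as a vector with a single 1.0."""
    vec = [0.0] * size
    vec[index] = 1.0
    return vec


def matvec(matrix, vector):
    """Matrix-vector product using plain lists."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def rnn_step(x, h_prev, w_xh, w_hh, w_hy):
    """One step: mix the current input with the previous hidden state."""
    pre = [a + b for a, b in zip(matvec(w_xh, x), matvec(w_hh, h_prev))]
    h = [math.tanh(p) for p in pre]
    return h, softmax(matvec(w_hy, h))


def next_char_probs(text, vocab, weights, hidden_size):
    """Feed text one character at a time; return the final prediction."""
    h = [0.0] * hidden_size
    probs = None
    for ch in text:
        x = one_hot(vocab.index(ch), len(vocab))
        h, probs = rnn_step(x, h, *weights)
    return probs


# Toy setup (assumed for illustration only).
VOCAB = list("abc ")
HIDDEN = 8
rng = random.Random(0)
WEIGHTS = (
    make_matrix(HIDDEN, len(VOCAB), rng),  # input -> hidden
    make_matrix(HIDDEN, HIDDEN, rng),      # hidden -> hidden (the memory)
    make_matrix(len(VOCAB), HIDDEN, rng),  # hidden -> output
)

# Both strings end in "b", but the preceding character differs.
probs_after_ab = next_char_probs("ab", VOCAB, WEIGHTS, HIDDEN)
probs_after_cb = next_char_probs("cb", VOCAB, WEIGHTS, HIDDEN)
```

Comparing `probs_after_ab` with `probs_after_cb` shows two different distributions over the next character, even though the last input was `b` in both cases. Training adjusts the three weight matrices so that those distributions start to match the corpus.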

Moving and Shaking

Ariadne has been moved out of the boatyard, and into her new home - a section of cleared woods in Scott’s parents’ backyard. The moving process was relatively painless, thanks to the generosity of the local boatyard owner and his team, and to Scott and his family who built the tent that will protect the boat from the elements as she is being restored. We are now able to begin the process of taking apart pieces of the hull in the most vulnerable areas, and really assessing the scope of what we are working with.

If you like, feel free to check out a list of